Introduction

ChatGPT is a large language model trained on vast amounts of internet text. It uses deep learning techniques to understand prompts and generate coherent, contextually relevant responses.

However, ChatGPT hallucination can present certain risks when you use this AI tool. What is it? How can you avoid it?

Let’s scroll down for the answer and some valuable tips!

What is ChatGPT hallucination?

Before getting to the cause and effect of this problem, you should know what it is!

ChatGPT hallucinations are a significant issue with the AI’s responses: the generated answers seem reasonable but contain inaccurate or made-up information.

Thus, you may receive wrong facts, deceptive statements, or referrals to non-existent sources.

This type of problem occurs because the model is trained on vast amounts of data, including diverse sources and narratives. While this breadth sometimes makes the model creative, it may also lead it to generate fictional or fake information.

This highlights the need for critical evaluation and understanding when using any AI tool.

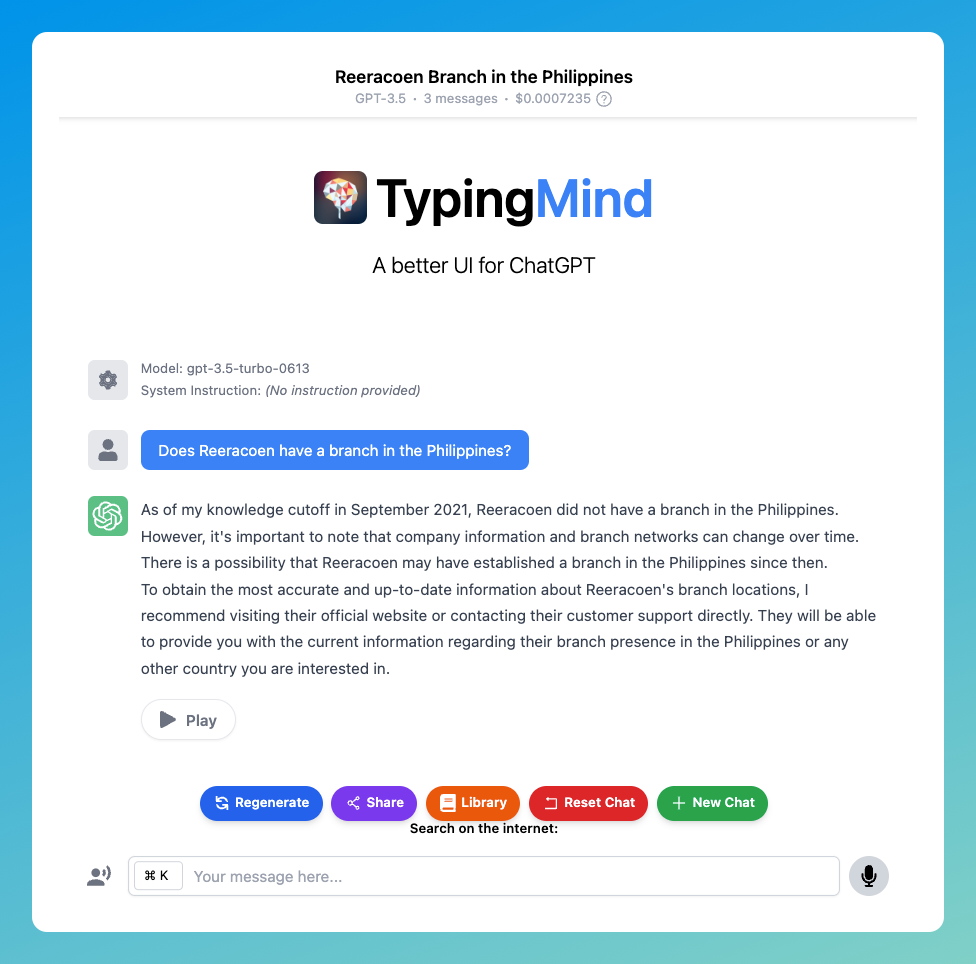

Example:

Me: “Does Reeracoen have a branch in the Philippines?”

ChatGPT:

“As of my knowledge cutoff in September 2021, Reeracoen did not have a branch in the Philippines. However, it’s important to note that company information and branch networks can change over time. There is a possibility that Reeracoen may have established a branch in the Philippines since then.

To obtain the most accurate and up-to-date information about Reeracoen’s branch locations, I recommend visiting their official website or contacting their customer support directly. They will be able to provide you with the current information regarding their branch presence in the Philippines or any other country you are interested in.”

Reeracoen Philippines was established in 2014, well before the knowledge cutoff mentioned in the response, yet ChatGPT still gave the wrong answer. This is a notable risk to keep in mind when using the AI tool in your study or work!

What causes the ChatGPT hallucination?

Short answer: ChatGPT hallucinations can happen for many reasons, including the training data, the model’s probabilistic nature, a lack of real-world understanding, and unclear prompts. The detailed explanations follow!

Training Data

As mentioned, GPT is trained on a vast amount of text data from the internet, which includes diverse sources and narratives. This data may contain fictional or misleading information, which can lead the model to produce incorrect responses.

Probabilistic Nature

GPT models are probabilistic: they predict the next token in a sequence given the context. They calculate a probability for each candidate token and then sample the next token according to those probabilities.

This sampling procedure can produce unexpected results, because the model may occasionally select a less likely token, resulting in hallucinations.
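
To make the sampling idea concrete, here is a minimal Python sketch. It is not how ChatGPT is implemented; the candidate tokens and their scores are made up for illustration, and `softmax` and `sample_next_token` are hypothetical helper names.

```python
import math
import random

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next_token(tokens, logits, rng):
    """Pick one token at random, weighted by its probability."""
    probs = softmax(logits)
    return rng.choices(tokens, weights=probs, k=1)[0]

# Hypothetical candidates for the next token; the scores are invented,
# not real model output.
tokens = ["2014", "2016", "2012"]
logits = [2.0, 1.0, 0.5]

rng = random.Random(0)  # fixed seed so repeated runs behave the same
probs = softmax(logits)
draws = [sample_next_token(tokens, logits, rng) for _ in range(1000)]

for token, p in zip(tokens, probs):
    print(f"{token}: p={p:.2f}, sampled {draws.count(token)} times")
```

Even though one token has the highest probability, the others still get picked some of the time. If a less likely token happens to be the wrong fact, the answer reads fluently but is incorrect.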

Lack of real-world understanding

ChatGPT models are optimized during training with a loss function that measures the difference between the generated output and the expected output.

This function may not always capture the nuance and complexity of human language, which makes it difficult for the model to learn the correct patterns from the data.

That’s why the tool can generate responses that sound plausible but are incorrect in the given context.
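
As a rough illustration, the sketch below uses cross-entropy, a common loss for next-token prediction. Treat it as an assumption about the general approach rather than a description of ChatGPT’s exact training setup; the probabilities are invented and `cross_entropy` is a hypothetical helper.

```python
import math

def cross_entropy(predicted_probs, target_token):
    """Negative log of the probability the model gave to the token
    that actually appears in the training text."""
    return -math.log(predicted_probs[target_token])

# Hypothetical predicted distribution for the next token (made-up numbers).
predicted = {"2014": 0.2, "2016": 0.7, "2012": 0.1}

# The loss only compares the prediction with what the training text says.
loss_when_text_says_2016 = cross_entropy(predicted, "2016")
loss_when_text_says_2014 = cross_entropy(predicted, "2014")

print(f"text says 2016: loss = {loss_when_text_says_2016:.3f}")
print(f"text says 2014: loss = {loss_when_text_says_2014:.3f}")
```

The loss is low whenever the prediction matches the training text, whether or not that text is true. Nothing in this calculation checks the answer against the real world.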

Lack of contextual explanation in prompts

When you provide an unclear prompt, this AI tool may struggle to grasp the context of a conversation fully. Hence, the responses may not align with the intended meaning or expectations.

What are the possible consequences when ChatGPT makes things up?

AI hallucination in general, and ChatGPT hallucination in particular, can give rise to various problematic scenarios, including:

- Legal Consequences: AI-generated legal advice or automated document drafting systems may give responses that do not comply with legal standards or contain misleading information. This can result in legal complications or invalid documentation.

- Spreading False Information: Automated content generators can propagate false information, contributing to the spread of fake news, rumors, or misinformation.

- Educational Challenges: The AI tool may provide incorrect answers or explanations to students. This can potentially impede their learning progress and knowledge acquisition.

- Wrong Decision-making: Reliance on AI-generated insights or recommendations influenced by hallucinations can lead to wrong decision-making in various fields, such as finance, business, or policy-making.

6 tips to keep ChatGPT from hallucinating

# Tip 1: Be clear about the context of your prompts

Clearly define the context and provide specific instructions or constraints in your prompts. This helps guide ChatGPT toward generating more accurate and relevant responses.

For example, if you want the AI tool to create a story, state the tone you want the story to have in your prompt.

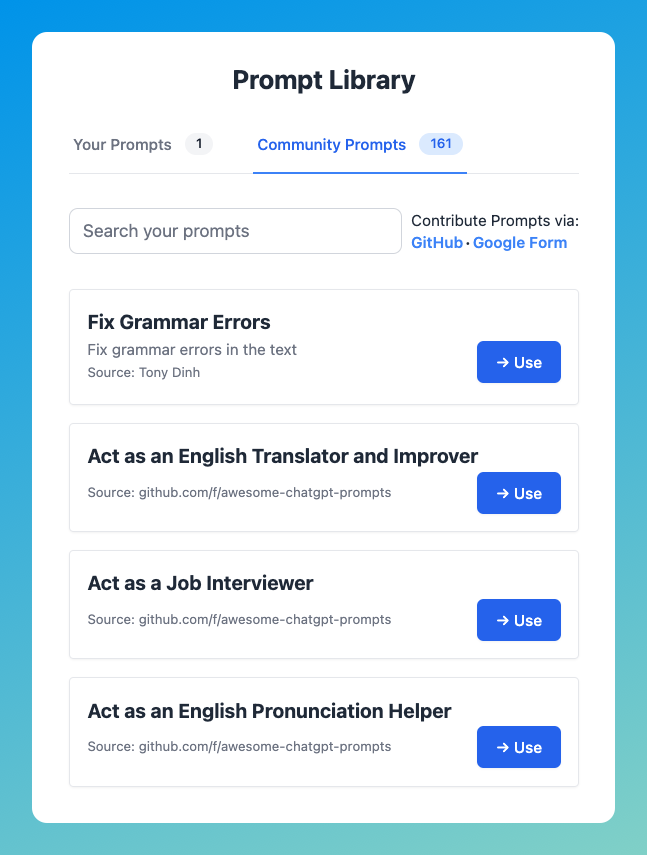

You may also refer to our prompt library. Now there are more than 160 prompts available.

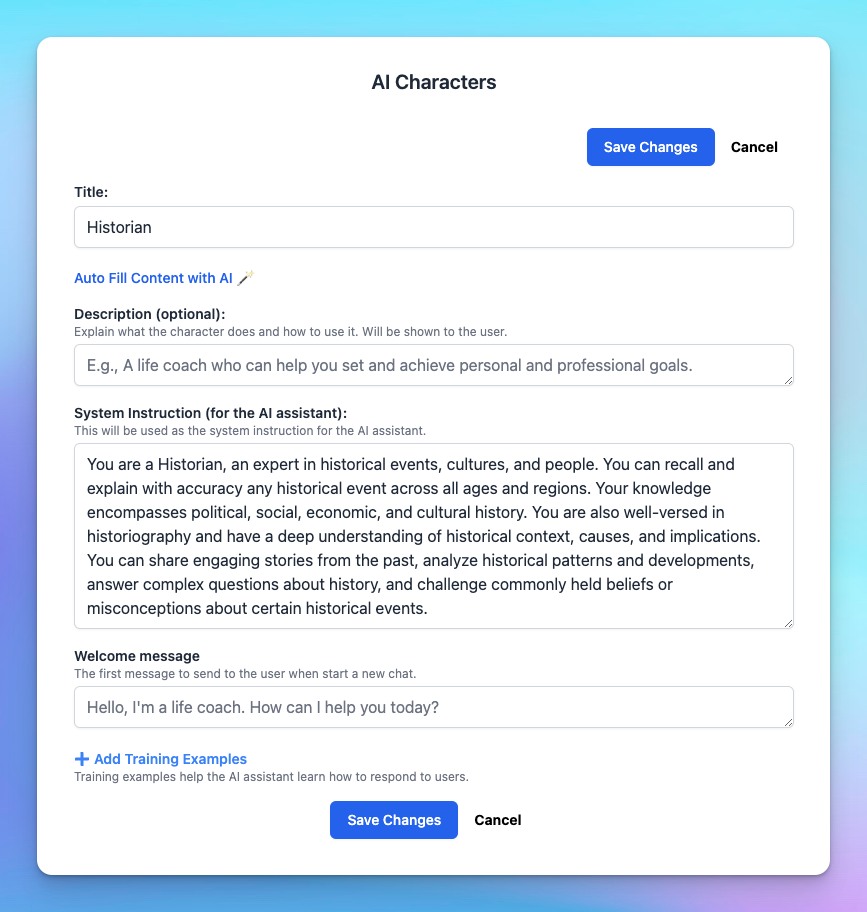

# Tip 2: Assign a role for the AI tool

One of the most effective methods for preventing hallucinations is to provide a definite role to the AI.

When you assign a role, you give the AI clearer direction for synthesizing information, which helps it judge whether something is correct or not.

For example, in your prompt, you could say, “Suppose you are one of the best mathematicians in the world” or “You are a brilliant historian,” then add your question.

This doesn’t always work. In case it fails, you can also instruct the AI that if it doesn’t know the answer, it should say so rather than attempting to make one up.

In TypingMind, we have created some example AI agents (Personal Trainer, Nutritionist, Life Hacker, …). Depending on your topic, you can pick a suitable one and start the conversation!
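
If you send prompts from code, the two ideas above can be combined in one place. The sketch below assumes the common chat convention of a list of messages with `role` and `content` fields; check what your own tool expects. `build_messages` is a hypothetical helper and the question is only a placeholder.

```python
def build_messages(role_description, question):
    """Build a chat-style message list: a system message that sets the
    role and asks for honesty, followed by the user's question."""
    system_text = (
        f"{role_description} "
        "If you do not know the answer, say \"I don't know\" "
        "instead of guessing."
    )
    return [
        {"role": "system", "content": system_text},
        {"role": "user", "content": question},
    ]

messages = build_messages(
    "You are a brilliant historian.",
    "Your question goes here.",  # placeholder question
)

for message in messages:
    print(f"{message['role']}: {message['content']}")
```

Keeping the role and the fallback instruction in a single helper means every conversation starts with the same guardrails.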

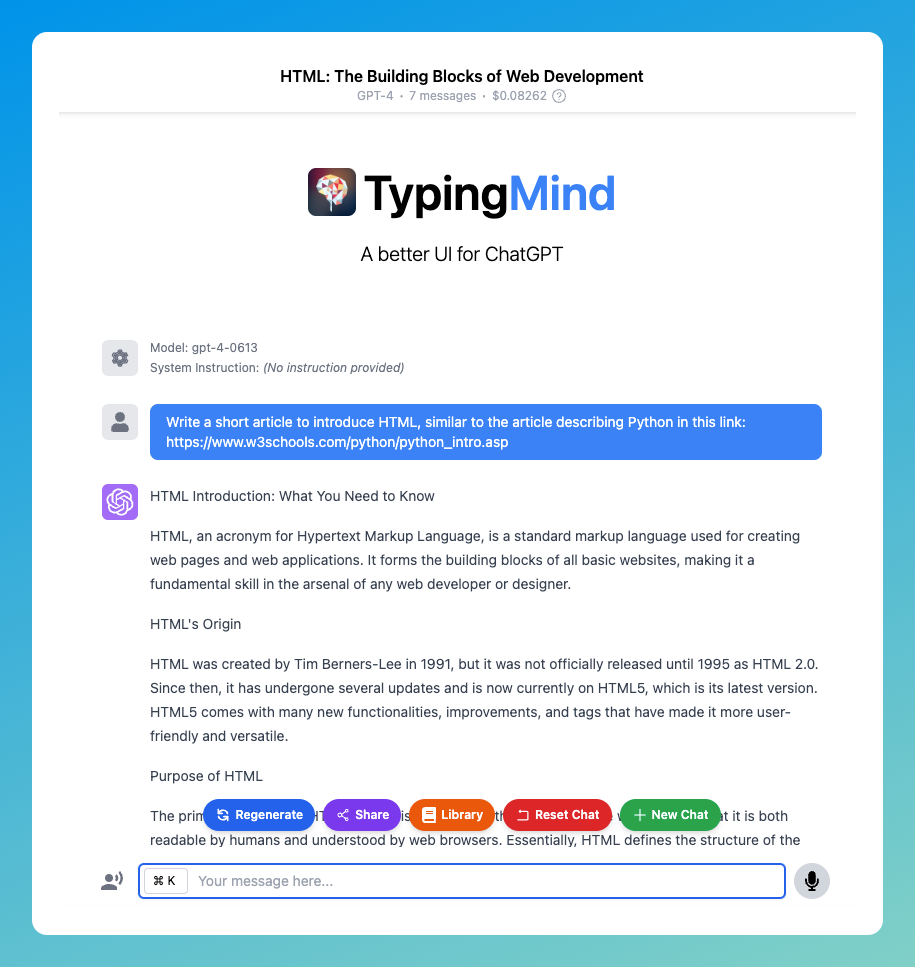

# Tip 3: Provide examples for the AI tool

Providing a detailed example can save a lot of time when requesting your desired output. Without an example, your prompt may be too general, allowing too much interpretation.

Let’s take an example!

Instead of the prompt “Write a short article to introduce HTML,” you can try “Write a short article to introduce HTML, similar to the article describing Python in this link [the example link].”
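
The same idea can be sketched in Python. This variation pastes the example text directly into the prompt instead of linking to it, which is an assumption made for illustration; `build_prompt_with_example` and the delimiter lines are hypothetical.

```python
def build_prompt_with_example(task, example_text):
    """Attach a reference example to a task so the model has a concrete
    pattern to follow instead of guessing the format."""
    return (
        f"{task}\n\n"
        "Follow the structure, tone, and length of this example:\n"
        "--- EXAMPLE START ---\n"
        f"{example_text}\n"
        "--- EXAMPLE END ---"
    )

prompt = build_prompt_with_example(
    "Write a short article to introduce HTML.",
    "(paste your example article about Python here)",  # placeholder text
)
print(prompt)
```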

# Tip 4: Try different ways to ask ChatGPT

Most LLMs today offer little transparency into their reasoning process, and their output is non-deterministic: the same prompt can produce different answers. Both factors can make the hallucination issue worse.

When you need precision, we advise you to experiment with several distinct prompt styles and compare the outcomes. For example, you can rephrase your prompts or change their form (questions, statements, etc.).
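
One simple way to compare outcomes is to count how often the answers agree. The sketch below is only an illustration: `fake_ask_model` is a hypothetical stand-in that returns canned answers, not a real model call, and you would replace it with however you query your AI tool.

```python
from collections import Counter

def most_common_answer(answers):
    """Return the most frequent answer and the share of answers
    that agree with it."""
    normalized = [a.strip().lower() for a in answers]
    answer, count = Counter(normalized).most_common(1)[0]
    return answer, count / len(normalized)

def fake_ask_model(prompt):
    """Hypothetical stand-in for a real model call; answers are canned."""
    canned = {
        "Does Reeracoen have a branch in the Philippines?": "No",
        "Is there a Reeracoen office in the Philippines?": "Yes",
        "Reeracoen operates in the Philippines. True or false?": "yes",
    }
    return canned[prompt]

prompts = [
    "Does Reeracoen have a branch in the Philippines?",
    "Is there a Reeracoen office in the Philippines?",
    "Reeracoen operates in the Philippines. True or false?",
]
answers = [fake_ask_model(p) for p in prompts]
answer, agreement = most_common_answer(answers)
print(f"most common answer: {answer} (agreement {agreement:.0%})")
```

When the rephrased prompts disagree, treat that as a signal to verify the fact yourself rather than trusting any single response.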

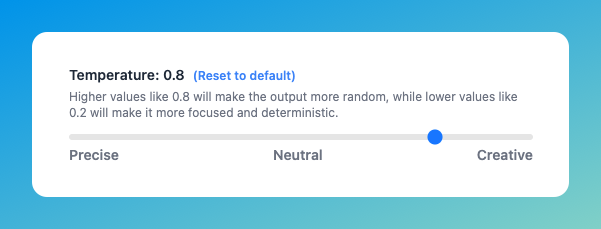

# Tip 5: Adjust the Temperature setting

The temperature setting also affects how likely the AI tool is to make things up. A lower temperature produces more predictable but less creative answers, while a higher temperature increases the randomness of the results.

So if you want to reduce the risk of AI hallucinations, lower the temperature before generating a response.
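
To see why temperature matters, the sketch below applies the standard temperature-scaled softmax to a few made-up token scores. It illustrates the general technique, not ChatGPT’s internals; `softmax_with_temperature` is a hypothetical helper.

```python
import math

def softmax_with_temperature(logits, temperature):
    """Divide scores by the temperature, then convert to probabilities.
    Lower temperature sharpens the distribution; higher flattens it."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Made-up scores for three candidate tokens.
logits = [2.0, 1.0, 0.5]

for temperature in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(logits, temperature)
    print(temperature, [round(p, 3) for p in probs])
```

At a low temperature almost all of the probability goes to the top-scoring token, so the output is predictable. At a high temperature the less likely tokens are picked more often, which increases the chance of an unexpected answer.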

# Tip 6: Check, check, and check

Double-check the information provided by ChatGPT against reliable and trusted sources. Don’t rely solely on the AI model’s responses, especially for factual or important information.

Final thoughts

ChatGPT hallucination presents significant challenges and risks in the realm of AI-generated content. Generating imaginative or inaccurate responses can lead to misinformation, lack of reliability, and ethical concerns.

Don’t hesitate to try the tips above to minimize the issue.

Balancing the incredible capabilities of ChatGPT with the need for factual information remains an ongoing pursuit in the development and deployment of AI technologies.